Our narratives of Man versus Machine focus on Machine becoming Man, then surpassing Him.

Man is something that shall be overcome. Man is a rope, tied between beast and overman - a rope over an abyss. What is great in man is that he is a bridge and not an end. (Zarathustra, thus imaginarily reported by Friedrich Nietzsche)

The AlphaGo documentary is about Man qua Man[1], or, more precisely, about one man by the name of Lee Sedol, who has a soft, high-pitched voice, a wife, and a daughter. In March of 2016, Sedol went from being well known to Go fans to being well known to everyone after losing 4 out of 5 games to AlphaGo, a computer program built by machine learning engineers at DeepMind.

Here is what the film beckoned me to see, feel, and infer.

1. Fear eats Man’s mind

And thus the native hue of resolution/ Is sicklied o’er with the pale cast of thought (Hamlet, Act III, Scene I) [2]

Sedol is a champion. He has cultivated excellence, put in his 10,000 hours of practice. Played game after game after game to get where he is today, working humbly and patiently with his coach. Playing Go the way he plays is an act of respect towards his elders, his nation, his family.

For Sedol, therefore, the match against AlphaGo was much more than a match. It was the appointed time to exhibit elegance, grace, and creativity above and beyond standard play. The moment when he left the hallowed halls of practice to squint into the harsh lights of the stage. When they applauded. When he bowed, and, lifting his head as slowly as possible to protract time into the infinite dilation of Cantor’s continuity, pupils dilating into eye drop blurs, seconds half-lived to infinitesimals, further, until he couldn’t stop it anymore, until, as he raised his head back up, he noticed his sense of self had changed, he observed himself being observed, knowing everyone was watching, rid himself of the caterpillar cloak called Lee Sedol to stretch his powdery wings as Man. He had become an allegory of human intelligence pitted against the machine.

No biggie. You got this. Just a little blip in history. Just a game. Underwear. Chickens in underwear with scraggly little legs hobbling under the weight of tubby guts bloated with donuts and Budweiser. Just like yesterday when no one was watching.

What a horrible place to be.

And yet, we honor it. We honor the resilience of the golfer who keeps his cool after a dud shot hooks way too far left. We honor the focus of the concert violinist who can make her way through the Mephistophelian haze of a Paganini caprice. We honor the ease excellent TED Talk speakers find when they share an idea they believe in. We honor it because we know how hard it is. Because we recognize that the difference between good and excellent is the fortitude of practice and the gumption to keep the mind in check, to settle its sabotage, to focus.

We are all Hamlet. Some of us more than others.

Sedol is also Hamlet. The documentary does a marvelous job eliciting our empathy as we watch him doubt, furrow, fear, apologize, strategize, wrestle with the pastiche reflection of what he could have done, who he could have been, how the narrative could have gone if only he had done this move instead of that move. We never hear the voices in his head but we can infer their clamor: “calm down, stay here, focus.” Sedol plays the game in context. He knows the stakes of the match and has no choice but to devote a portion of his brain to the everything else that is not the local task. It’s plausible that only 30% of his brain power could be devoted to the actual game.

AlphaGo has no voices in its head. It has no runaway probabilities. The only probabilities it calculates span the trees it searches to find the next move and win.

2. Man is a social animal who relies on nonverbal communication

Man is by nature a social animal; an individual who is unsocial naturally and not accidentally is either beneath our notice or more than human. Society is something that precedes the individual. Anyone who either cannot lead the common life or is so self-sufficient as not to need to, and therefore does not partake of society, is either a beast or a god. (Aristotle, Politics) [3]

AlphaGo has no hands. It has no face. Unless DeepMind decides to embody future versions in a robot somersaulting down the uncanny valley, it will never feel the silky lamination of a Go stone, never calm its nerves by methodically circling the stone between the pads of its right thumb and index finger as it contemplates its next move.

Like the infamous Godot in Samuel Beckett’s play, in the documentary, AlphaGo feels more like a prop than a character. It’s undoubtedly there, ubiquitous, but somehow also absent. Sedol engages with AlphaGo through a ventriloquist named Aja Huang, a Taiwanese computer scientist on the DeepMind team who is also an amateur 6-dan Go player. Sedol never engages with AlphaGo directly: only with its diplomat, its emissary.

Huang is no throw-away character. The ventriloquist could have been anyone: his task was to look at the digital display indicating AlphaGo’s move and translate this to the physical board by placing the stone in the right place. He could have carried out this task with zero knowledge of what it meant. Brawn without brains. Pure, robotic execution.

The positions of the stones mean something to Huang. He bridges two ways of seeing the game, like a computer scientist charting probabilities and like a Go player strategizing moves.

And this means that his face could have relayed emotional content back to Sedol, allowing the champion to plunder the emotional cues that are such an integral part of the game. In the first match, Sedol felt alienated because when he looked up at Huang to gather information from his temples, eyebrows, forehead, pupils, cheeks, lips, chin, elbows, freckles, arm hairs, face hairs, eyes, sweat beads, breath, aura, the signals were absent. Huang didn’t exhibit the weight of concentration or even the active restraint of a bluff. It was almost worse that he wasn’t just a robot man because he had enough knowledge to lead Sedol to anticipate emotional cues but fell short because his ego wasn’t engaged. He was, in the end, only an observer. The stage shifted to a theater of deliberate alienation, as in the movie The Lobster.

This inverted uncanny valley tells us something about how we communicate. It’s cliché to underscore the importance of nonverbal communication, but it was quite powerful to see how much Sedol typically relies upon emotional cues as animal, as mammal, when he plays against a normal opponent, and how the absence of those cues threw him off. I suspect some of the reticence we feel around trust and explainability stems from our brains processing the world as animals. We don’t actually require explanations from people to trust them and obey them. Power and persuasion seep through different seams.

3. Computer scientists and subject matter experts see the same thing different ways

While Huang speaks neural network and speaks Go, most of the DeepMind scientists lack the same bilingual subject matter expertise (I may be incorrect, but I’m pretty sure not everyone who worked on AlphaGo knows the game). Indeed, one fascinating aspect of contemporary machine learning is that the systems can learn what aspects of the data are relevant for a prediction or classification task rather than having a person apply their knowledge to hand pick which aspects will be most relevant. This is not universally the case, and it’s not to denigrate the value of subject matter expertise: on the contrary, there is excellent research afoot to make it easier for people with subject matter expertise in some domain (be that cancer diagnostics or fashion taste or 50 years of experience tweaking knobs to offset the quirks of an office building in lower Manhattan) to represent their knowledge as distributions and parameters without needing to be a scientist to do so. But a characteristic of the deep learning moment is that a crafty scientist can consider a problem abstractly, move away from the particular details we observe as the problem’s phenotype (e.g., a move in the game of Go) and focus on the mathematical underpinnings of the problem (e.g., the number of hidden layers or some other architectural choices to make in a neural network). Add to this that what makes a machine learning problem a machine learning problem is that there is too much variance for us to deterministically write out all the rules: instead, we provide primers that enable the system to iterate fast (that’s where we need all the computational power) to map inputs to outputs until the mapping works well most of the time. It’s like selecting the yeast that will yield the best bread.

Programs for playing games often fill the role in artificial intelligence research that the fruit fly Drosophila plays in genetics. Drosophilae are convenient for genetics because they breed fast and are cheap to keep, and games are convenient for artificial intelligence because it is easy to compare a computer’s performance on games with that of a person. (John McCarthy and Ed Feigenbaum, Tribute to Arthur Samuel)

The AlphaGo documentary did a wonderful job juxtaposing how computer scientists tracked the game’s progress and how Sedol and the Go commentators tracked the game’s progress. The scientists viewed the game mathematically, as a series of abstract scores and probabilities. The players viewed the game phenotypically, as a series of moves on the board. These were two fundamentally different ways of viewing the same problem, illustrating the silos of communication that quickly emerge, like tectonic plates shooting mountain sprouts, in any enterprise. The endgame for the opponents was also quite different. The AlphaGo team was fundamentally interested in using Go as a testing ground for computational possibility, the particular use case required to explore the larger problem of building a system that can act intelligently. Sedol was fundamentally interested in playing perfect Go, and potentially abstracting lessons from play to other aspects of his life. These conflicting endgames are often at work in the dialectic of innovation, yin and yang dancing drunk through the discrete step changes of technological progress.

I do wonder if we could rewrite the narrative of Man versus Machine as one of two different ways of creating, encapsulating, and sharing knowledge. The documentary made this about Demis Hassabis and the AlphaGo Team versus Sedol, West versus East, traditional culture versus computer science, two ways of representing knowledge and viewing the world. It’s ultimately a more grounded narrative. In our HBR Ideacast episode, I suggested to host Sarah Green Carmichael that it’s helpful to reframe a supervised learning system as “one human judgment versus the statistical average of thousands of human judgments,” and then ask which one you’d rather rely on. Granted, the new AlphaGo Zero system is one of self play, not one that mines past human judgment. But the yeast primer is still coded and crafted by human minds with a particular way of framing problems as engineered mathematical models.
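To make the “one human judgment versus the statistical average of thousands” framing concrete, here is a minimal sketch in Python. Everything in it is invented for illustration: the true value, the noise level, and the assumption that each judge’s error is independent and unbiased (real human judgments often are neither). It is a toy model of averaging, not a description of how AlphaGo or any particular supervised learning system works.

```python
import random
import statistics

random.seed(0)  # fixed seed so the run is repeatable

# Hypothetical setup: there is some true quantity, and every judge
# perceives it with independent, unbiased Gaussian noise.
TRUE_VALUE = 100.0
NOISE_SD = 10.0
N_JUDGES = 1000

judgments = [random.gauss(TRUE_VALUE, NOISE_SD) for _ in range(N_JUDGES)]

# Error of the pooled judgment (the average of all judges).
crowd_error = abs(statistics.fmean(judgments) - TRUE_VALUE)

# Typical error of a single judge (mean absolute error across judges).
individual_errors = [abs(j - TRUE_VALUE) for j in judgments]
typical_individual_error = statistics.fmean(individual_errors)

print(f"error of the average judgment: {crowd_error:.2f}")
print(f"typical error of one judge:    {typical_individual_error:.2f}")
```

Under these assumptions the averaged judgment can never be further from the truth than the typical individual is, and with a thousand judges it is usually far closer. The interesting cases are the ones where the assumptions break: when the judges share a bias, the average inherits it.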

4. Algorithms change how Man makes sense of the world

In a 2011 TED Talk, Kevin Slavin explained how trading algorithms have reshaped the physical landscape (we build structures to transmit the fastest signal possible so our algos can outcompete one another by fractions of a second). In a 2018 phone conversation, my partner John Frankel at ffVC helped me crystallize my understanding that task-specific machine learning algorithms are poised to reshape, if not already actively reshaping, our cognitive landscape.[4]

Much of the language used to describe AlphaGo betokens alienness and strangeness, facets of thought that are not only not human but antihuman. From a 2017 Atlantic article:

Since May, experts have been painstakingly analyzing the 55 machine-versus-machine games. And their descriptions of AlphaGo’s moves often seem to keep circling back to the same several words: Amazing. Strange. Alien.

“They’re how I imagine games from far in the future,” Shi Yue, a top Go player from China, has told the press. A Go enthusiast named Jonathan Hop who’s been reviewing the games on YouTube calls the AlphaGo-versus-AlphaGo face-offs “Go from an alternate dimension.” From all accounts, one gets the sense that an alien civilization has dropped a cryptic guidebook in our midst: a manual that’s brilliant—or at least, the parts of it we can understand.

AlphaGo makes a few moves in the match versus Sedol that flummox him. As a non-Go player, I couldn’t make sense of the moves myself, but relied upon the commentary and interpretation offered by the film. What I took away was the sense that AlphaGo did not rely upon the same leading indicator heuristics that are the common tropes of seasoned Go players. If we think about it, it shouldn’t come as a surprise that the search space of 10^172 positions (according to 11th-century Chinese scholar Shen Kuo) contains brilliance that has to date evaded master Go players. But what’s even more interesting is the cultural significance of knowledge transfer from generation to generation. If Go is a staging ground for life, then mastery can and should be measured by analogical transferability and applicability. It’s like a pedagogical philosophy that values critical thinking: teach them Shakespeare, teach them whatever nouns you want, but focus on enabling them to transfer the verbs so they can shape shift to solve problems as they arise.

What comes off as alien is a system that is optimized for one task, regardless of analogy and transfer. And one need only win Go by one point, not many. The move with the highest likelihood of winning by the narrowest of margins will look different than the move that betokens potentially less likelihood of success, but a larger cushion. Map this to making big choices in life: most people study the safe subject to keep options open rather than following the risky path of studying what they love. The tradeoffs are different. They’re optimizing for different types of outcomes and using a different calculus.
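A minimal sketch of that difference in calculus, in Python. The two candidate moves, their names, and their outcome probabilities are entirely made up; nothing here comes from AlphaGo’s actual evaluation. The point is only that ranking moves by the probability of winning and ranking them by the expected size of the win can pick different moves.

```python
# Hypothetical candidate moves. Each maps a final point margin to the
# probability of ending the game with that margin (positive = win).
candidate_moves = {
    "narrow_but_safe": {1: 0.90, -1: 0.10},
    "big_but_risky": {20: 0.60, -5: 0.40},
}


def win_probability(outcomes):
    """Total probability of finishing with any positive margin."""
    return sum(p for margin, p in outcomes.items() if margin > 0)


def expected_margin(outcomes):
    """Probability-weighted average margin, wins and losses together."""
    return sum(margin * p for margin, p in outcomes.items())


# A win-only optimizer cares about the first score.
best_by_win = max(
    candidate_moves, key=lambda m: win_probability(candidate_moves[m])
)

# A player who wants a comfortable cushion cares about the second.
best_by_margin = max(
    candidate_moves, key=lambda m: expected_margin(candidate_moves[m])
)

print("maximize chance of winning:", best_by_win)
print("maximize expected margin:  ", best_by_margin)
```

With these invented numbers the win-only objective chooses the one-point move (a 90% chance of winning versus 60%), while the margin objective chooses the riskier move (an expected margin of 10 points versus 0.8).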

So, following John Frankel, I’d like to propose that our heuristics will change as our minds increasingly engage with tools that optimize ruthlessly against one task. Machine Go is different than Man Go because it’s not designed as a pedagogical tool to teach life lessons. It’s designed to win, designed to exploit the logic of one search space and one game and one set of rules. But that need not be all that bad. There’s something lovely in coming to terms with the fact that success only requires one point, that we need not rely upon the greedy heuristics that are familiar as we navigate the world. What’s deemed as alien is a means of coming to terms with our own predilections to generalize, when it may not always (or often) be the best bellwether of success. It’s the inverse interpretation of Bostrom’s paper clip optimization monster. An invitation for us to ponder our values and ethical stance as we increasingly interact with algorithms geared to optimize without questioning if that’s ultimately what we want and need.

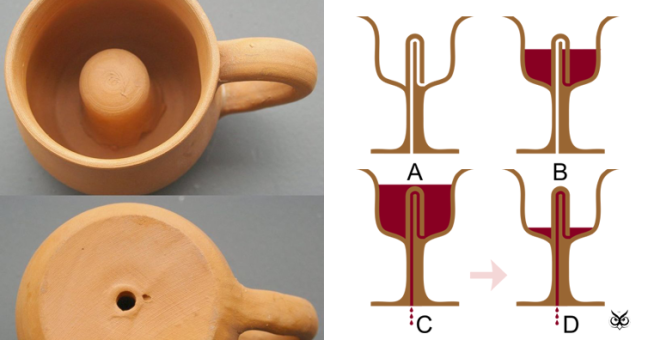

The Pythagorean Cup is at once practical joke, physics lesson, and moral chastiser. If you are greedy and put too much wine into the cup, a siphon effect kicks in and all the liquid drains out. This kind of analogical triple meaning is the opposite of algorithmic thinking in its current form.

Conclusion

The AlphaGo documentary left me feeling empathy and admiration for Lee Sedol. Not as a Go champion, not as an allegory of Man’s Intelligence, but as a man. His humility was beautiful. His striving was admirable. His kindness towards his daughter was noticeable. His Korean duty was evident. He was many features cobbled into a being, with feelings and a heartbeat, and a mind. He learned something from the matches and lost gracefully, shaking Hassabis’ hand as he left the press conference, cameras flashing in his wake.

[1] Gender warriors, please do forgive me. There’s implicit critique about who controls the AI narrative in keeping the reference to Man, and I find the capitalization lends a curious aura of allegory to this post, which is riddled with references to male heroes.

[2] Rainer Werner Fassbinder (whose name I always mistake for Rainer Maria until I remember that’s the other Rainer, the Rilke Rainer) has a marvelous film entitled Angst Essen Seele Auf, translated as Ali: Fear Eats the Soul (which I think should be translated as Ali: Fear Eat the Soul to better capture the grammatical error Ali, one of the film’s protagonists, makes when he speaks broken German without conjugating verbs) about an “almost accidental romance kindled between a German woman in her mid-sixties and a Moroccan migrant worker around twenty-five years younger.” While released in 1973, the lessons are all the more relevant today. The other eating metaphor on my mind is Andreessen’s software eating the world, and now Steven Cohen and Matthew Granade saying that models will run the world. What Cohen and Granade get right in their article is that AI systems are about much more than just Jupyter notebooks with models. You have to put models into production, use them as hypotheses to build closed-loop systems that get better as they engage with the world. So, so, so, so, so, so, so many companies still seem to miss this part. It’s hard, and requires work that isn’t deemed sexy by the cognoscenti and the rockstars (how awesome is the word cognoscenti?).

[3] I’ve been thinking a lot about the social value of work and the workplace and have the early, what-my-mind-does-walking-and-running-level intuitions of a blog post about why work is an opportunity to experience positive, Aristotelian freedom (where self-actualization occurs through participation in a common, social goal) versus negative freedom (how we normally conceptualize freedom as the absence of constraint for the individual) and what that means for team and meaning and also the intrinsic value of work (for the leisure promised by some UBI pundits rubs me the wrong way; not all UBI pundits believe self-actualization is an individual project, and the most sober ones think it’s a bump needed to become more socially connected (including Charles Murray, which is interesting…)). Stay tuned.

[4] John has an uncanny ability to understand and represent the heart of the matter in emerging technologies. It’s a privilege to learn from him. I’ve mentioned this before on the blog, but John also has the world’s best out-of-office emails, which have inspired my own (mine are far less sardonic and far more earnest, not by choice but by the ineluctable traps of my style).

The featured image is from an article the newspaper Korea Portal posted March 15, 2016. In the article, Sedol says: “I wanted to end the tournament with good results, but feel sad that I couldn’t do it. As I said before, this is not a loss for man, but a loss for me. This tournament really showed what my shortcomings are.” As in the documentary, Sedol interprets his loss as a personal failure. He doesn’t view himself as the representative of mankind. This isn’t man versus machine. It is one match. One man versus his opponent. But because the opponent doesn’t feel like Sedol does, doesn’t care if it wins, it becomes one man versus himself.
